AI/ML Operations

Logistic Regression for Classification: Concept, Sigmoid Function, Cost Function, and Implementation

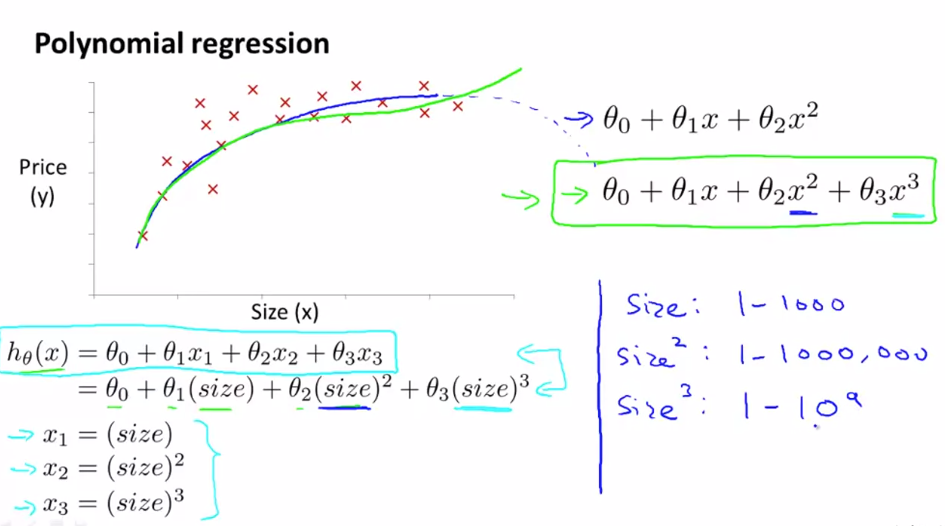

📈 Polynomial Regression

Polynomial regression is an extension of linear regression in which we model a nonlinear relationship between input and output by adding powers of the input feature $x$ (such as $x^2$ and $x^3$) as extra features.

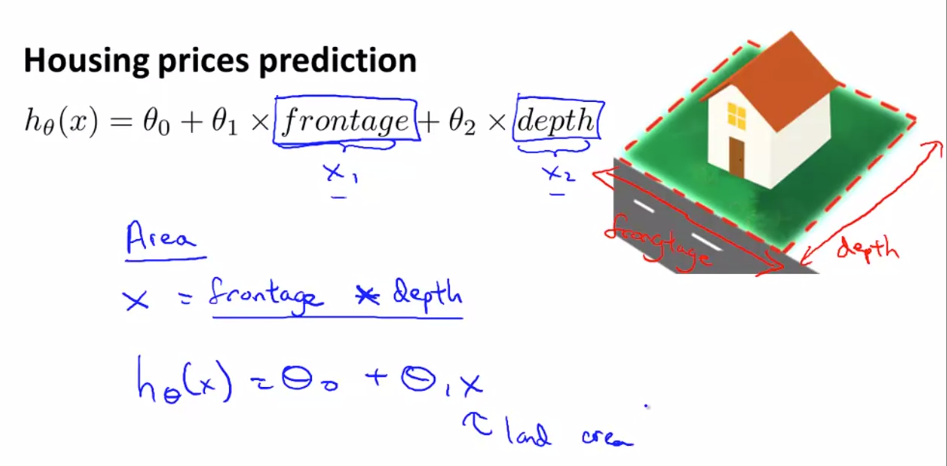

Sometimes defining a new feature gives you a better model that also requires less computation.

Use case:

When the relationship between input and output follows a polynomial curve rather than a straight line.

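For example, a house price can be modeled from the area of the property. Instead of building an equation in two variables (the two dimensions that make up the area), we can compute the area once and define an equation in a single variable.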
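One way to write the house-price example out, as a sketch only: the symbols here are assumptions and do not come from the original notes. Let $x_1$ and $x_2$ be the two dimensions of the property, $w_1, w_2, w_3$ the weights, and $b$ the bias. The two-variable model and the engineered one-variable model might then look like this:

$$
f(x_1, x_2) = w_1 x_1 + w_2 x_2 + b
$$

$$
x_3 = x_1 x_2, \qquad f(x_3) = w_3 x_3 + b
$$

The second form has one weight fewer to learn, and it encodes the product $x_1 x_2$ directly, which no choice of $w_1$ and $w_2$ in the first form can express.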

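- Feature scaling becomes crucial in polynomial regression, because powers such as $x^2$ and $x^3$ can span much larger ranges than $x$ itself.

- Some algorithms can choose which features to use when fitting polynomial curves.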

- Feature engineering is the process of creating new features from existing ones to improve model performance.
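The points above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the helper names `polynomial_features` and `zscore` are hypothetical, the data is synthetic, and z-score normalization is just one possible scaling choice. The fit uses ordinary least squares for brevity.

```python
import numpy as np

def polynomial_features(x, degree):
    """Stack x, x^2, ..., x^degree as columns (no bias column)."""
    x = np.asarray(x, dtype=float)
    return np.column_stack([x ** p for p in range(1, degree + 1)])

def zscore(features):
    """Scale each column to zero mean and unit standard deviation."""
    mean = features.mean(axis=0)
    std = features.std(axis=0)
    return (features - mean) / std, mean, std

# Synthetic data, made up for illustration: y = 2x^2 - 3x + 5, no noise.
x = np.linspace(1.0, 20.0, 50)
y = 2.0 * x**2 - 3.0 * x + 5.0

# Feature engineering: create x, x^2, x^3 from the single input x.
raw = polynomial_features(x, degree=3)

# The raw columns cover very different ranges (x: 19, x^2: 399, x^3: 7999).
raw_ranges = raw.max(axis=0) - raw.min(axis=0)

# After scaling, every column has mean 0 and standard deviation 1.
scaled, mean, std = zscore(raw)

# Fit weights and bias by least squares on the scaled features.
design = np.column_stack([scaled, np.ones(len(x))])
weights, *_ = np.linalg.lstsq(design, y, rcond=None)
predictions = design @ weights
```

Scaling matters most when the model is trained with gradient descent, where features on very different ranges slow convergence. The least-squares solve here does not strictly need it, but the scaled features keep the example consistent with the advice above.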

Generic polynomial regression model of degree n: