Anomaly Detection Using Multivariate Gaussian Distribution

Learn anomaly detection using multivariate Gaussian distribution to identify unusual patterns and correlated outliers in datasets. Understand covariance matrices, parameter estimation, probability density functions, and threshold-based anomaly detection techniques used in machine learning systems.


Multivariate Gaussian Distribution

The multivariate Gaussian distribution is an extension of the normal (Gaussian) distribution to multiple variables.

It is commonly used in:

- anomaly detection

- probabilistic modeling

- Gaussian mixture models

- Bayesian ML

- Kalman filters

Motivation

Suppose we are monitoring machines in a data center.

Features:

| Feature | Meaning |
|---|---|
| x1 | CPU Load |
| x2 | Memory Usage |

Most machines behave normally:

High CPU ↔ High Memory

Low CPU ↔ Low Memory

The features are correlated.

Problem with Basic Gaussian Anomaly Detection

Earlier anomaly detection assumed:

$$p(x) = p(x_1)\,p(x_2)\cdots p(x_n) = \prod_{j=1}^{n} p(x_j; \mu_j, \sigma_j^2)$$

meaning:

- features are independent
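The independence assumption above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the function name `independent_gaussian_density` and the standardized parameters (mean 0, variance 1) are assumptions made for the example.

```python
import numpy as np

def independent_gaussian_density(x, mu, var):
    """p(x) as a product of per-feature univariate Gaussian densities."""
    x, mu, var = (np.asarray(a, dtype=float) for a in (x, mu, var))
    per_feature = np.exp(-0.5 * (x - mu) ** 2 / var) / np.sqrt(2.0 * np.pi * var)
    return float(per_feature.prod())

# Hypothetical parameters: two standardized features.
mu = np.array([0.0, 0.0])
var = np.array([1.0, 1.0])

p_same_sign = independent_gaussian_density([1.0, 1.0], mu, var)
p_opposite_sign = independent_gaussian_density([1.0, -1.0], mu, var)
```

Because each feature is scored on its own, `p_same_sign` and `p_opposite_sign` are identical: the model cannot tell whether the two features moved together or in opposite directions.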

Why This Fails

Suppose we observe:

CPU Load = Low

Memory Use = High

Individually:

- the CPU value falls within its usual range, so it looks normal

- the memory value falls within its usual range, so it looks normal

But together:

- this combination is strange

Visual Intuition

Normal data may lie along a diagonal trend:

Low CPU → Low Memory

High CPU → High Memory

An anomalous point:

Low CPU + High Memory

lies far away from the normal pattern.

Basic Gaussian fails because it ignores feature correlation.

Solution: Multivariate Gaussian

Instead of modeling each feature separately:

$$p(x_1),\; p(x_2),\; \dots,\; p(x_n)$$

we model:

$$p(x) = p(x; \mu, \Sigma)$$

all together.

Multivariate Gaussian Formula

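The probability density is:

$$p(x; \mu, \Sigma) = \frac{1}{(2\pi)^{n/2}\,|\Sigma|^{1/2}} \exp\left(-\frac{1}{2}(x-\mu)^{T}\,\Sigma^{-1}\,(x-\mu)\right)$$

where $n$ is the number of features, $\mu$ is the mean vector, $\Sigma$ is the covariance matrix, and $|\Sigma|$ is its determinant.

No need to memorize this formula.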
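To make the density concrete, here is one way it could be evaluated with NumPy. The function name `multivariate_gaussian_density` is an assumption for this sketch. It solves a linear system instead of inverting Σ explicitly and uses the log-determinant, which is a common numerical choice rather than something the formula requires.

```python
import numpy as np

def multivariate_gaussian_density(x, mu, sigma):
    """Evaluate p(x; mu, Sigma) for one point or for each row of x."""
    x = np.atleast_2d(np.asarray(x, dtype=float))
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    n = mu.shape[0]

    diff = x - mu
    # Solve Sigma z = diff^T instead of forming the inverse of Sigma.
    solved = np.linalg.solve(sigma, diff.T).T
    quad = np.sum(diff * solved, axis=1)      # (x - mu)^T Sigma^{-1} (x - mu)

    _, logdet = np.linalg.slogdet(sigma)      # log |Sigma|
    log_norm = -0.5 * (n * np.log(2.0 * np.pi) + logdet)
    return np.exp(log_norm - 0.5 * quad)

# Density at the mean for an identity covariance in two dimensions.
p_at_mean = multivariate_gaussian_density([0.0, 0.0], [0.0, 0.0], np.eye(2))[0]
```

With `mu = [0, 0]` and the identity covariance, the value at the mean equals 1 / (2π), which is a quick sanity check against the formula.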

Parameters

1. Mean Vector

Represents the center of the distribution.

Example:

$$\mu = \begin{bmatrix} 0 \\ 0 \end{bmatrix}$$

means:

- centered at origin

2. Covariance Matrix

An $n \times n$ matrix $\Sigma$ describing:

- variances

- correlations

Covariance Matrix Structure

For 2 features:

$$\Sigma = \begin{bmatrix} \sigma_1^2 & \sigma_{12} \\ \sigma_{12} & \sigma_2^2 \end{bmatrix}$$

1. Diagonal Entries

Represent variance of each feature.

Example:

$$\Sigma = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}$$

means:

- both features have equal variance

- no correlation

2. Off-Diagonal Entries

Represent the covariance between features, which captures how strongly and in which direction they are correlated.

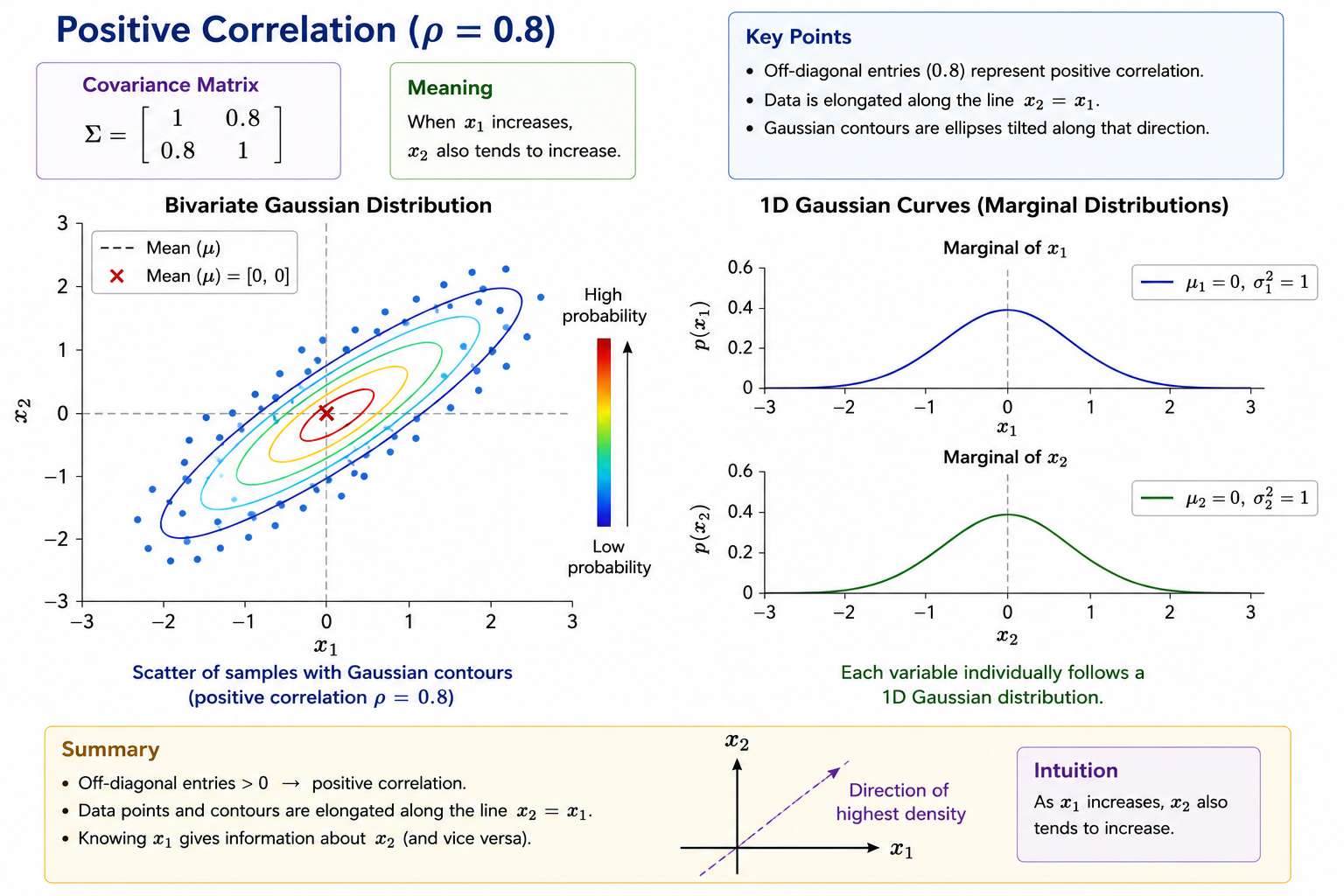

Positive Correlation

Example (illustrative values):

$$\Sigma = \begin{bmatrix} 1 & 0.8 \\ 0.8 & 1 \end{bmatrix}$$

Meaning:

When x1 increases

x2 also tends to increase

Probability mass lies near:

$$x_1 = x_2$$

Visual Shape

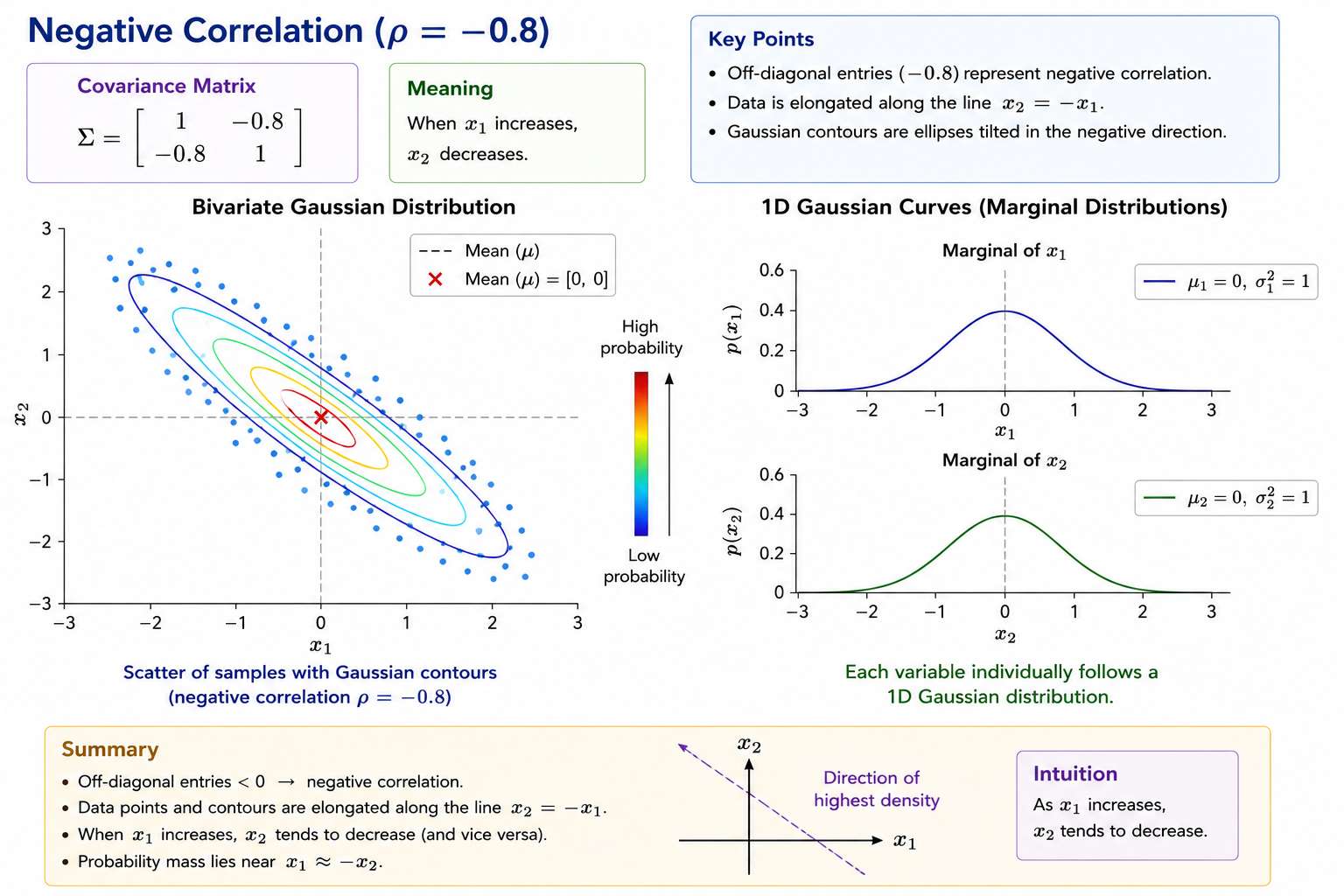

Negative Correlation

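Example (illustrative values):

$$\Sigma = \begin{bmatrix} 1 & -0.8 \\ -0.8 & 1 \end{bmatrix}$$

Meaning:

When x1 increases

x2 tends to decrease

Probability mass lies near:

$$x_1 = -x_2$$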

Visual Shape

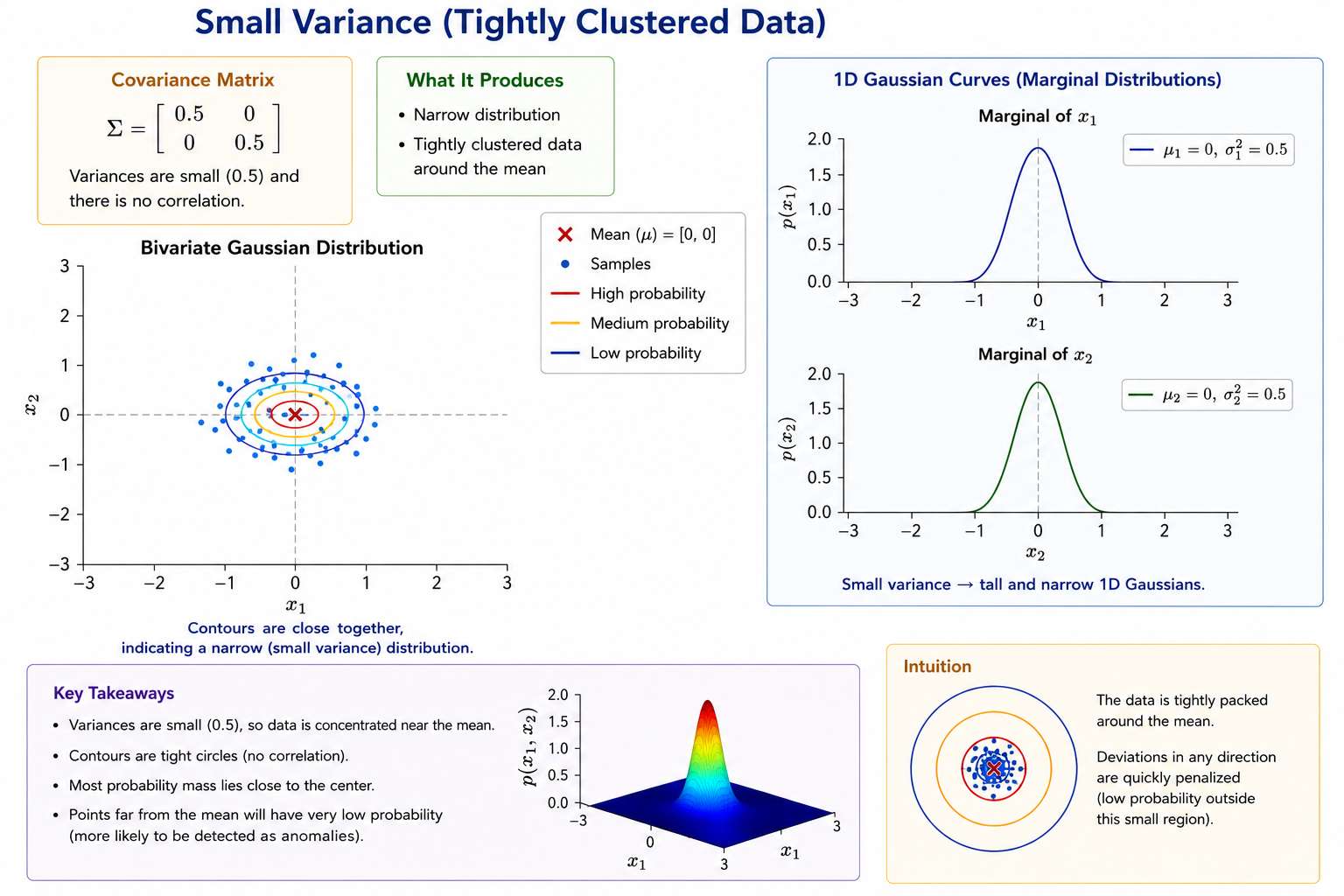
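The effect of the off-diagonal entries can be checked by sampling. The sketch below draws points from two zero-mean Gaussians whose covariance matrices differ only in the sign of the off-diagonal entry. The value 0.8 and the sample size are arbitrary choices made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
mu = np.zeros(2)

# Illustrative covariance matrices: same variances, opposite off-diagonal sign.
sigma_pos = np.array([[1.0, 0.8], [0.8, 1.0]])
sigma_neg = np.array([[1.0, -0.8], [-0.8, 1.0]])

samples_pos = rng.multivariate_normal(mu, sigma_pos, size=5000)
samples_neg = rng.multivariate_normal(mu, sigma_neg, size=5000)

# Sample correlation between x1 and x2 (columns are features).
corr_pos = np.corrcoef(samples_pos, rowvar=False)[0, 1]
corr_neg = np.corrcoef(samples_neg, rowvar=False)[0, 1]

print(f"positive off-diagonal: correlation = {corr_pos:.2f}")
print(f"negative off-diagonal: correlation = {corr_neg:.2f}")
```

The sample correlations should land close to +0.8 and -0.8, matching the upward-tilted and downward-tilted ellipses described above.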

3. Effect of Variance

Small Variance

Produces:

- narrow distribution

- tightly clustered data

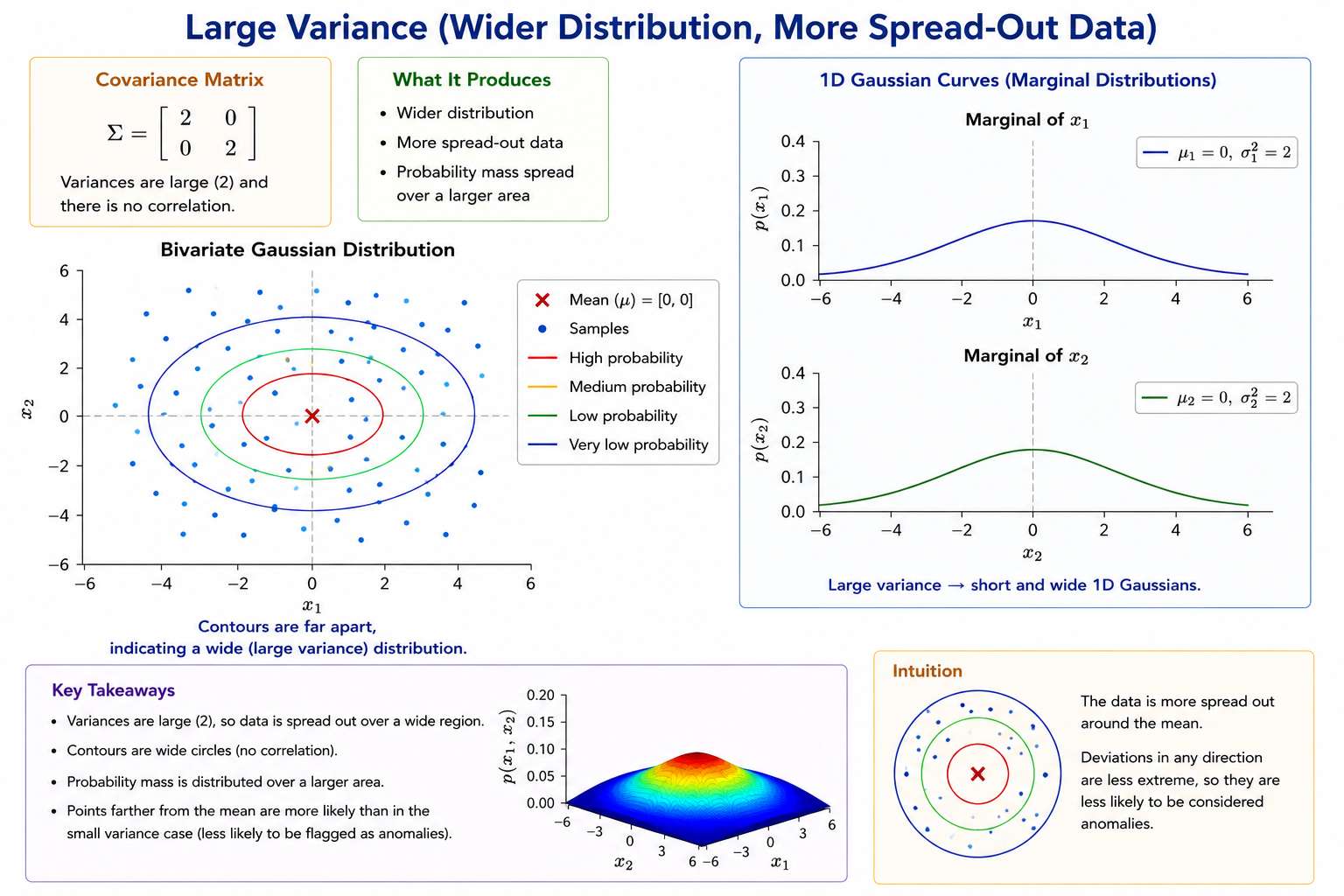

Large Variance

Produces:

- wider distribution

- more spread-out data

Geometric Interpretation

Multivariate Gaussian creates:

Elliptical Probability Regions

[Diagram: an elliptical region, with the highest probability at its center]

Points near center:

- high probability

Points far away:

- low probability
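"Near" and "far" here are measured relative to the shape of the ellipse, not by ordinary straight-line distance. One common way to express this is the squared Mahalanobis distance, which is the quadratic term inside the exponent of the density. The sketch below uses an illustrative covariance with off-diagonal 0.8; the helper name `mahalanobis_squared` is an assumption.

```python
import numpy as np

def mahalanobis_squared(x, mu, sigma):
    """(x - mu)^T Sigma^{-1} (x - mu): squared distance in units of the ellipse."""
    diff = np.asarray(x, dtype=float) - np.asarray(mu, dtype=float)
    return float(diff @ np.linalg.solve(sigma, diff))

mu = np.array([0.0, 0.0])
sigma = np.array([[1.0, 0.8], [0.8, 1.0]])  # illustrative positive correlation

along_trend = np.array([1.0, 1.0])     # follows the x1 = x2 direction
against_trend = np.array([1.0, -1.0])  # same Euclidean distance, opposite direction

d_along = mahalanobis_squared(along_trend, mu, sigma)
d_against = mahalanobis_squared(against_trend, mu, sigma)
```

Both points are equally far from the center in Euclidean terms, yet `d_against` is about nine times larger than `d_along`, so the second point sits on a much lower-probability contour.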

Anomaly Detection

Compute:

$$p(x; \mu, \Sigma)$$

If:

$$p(x) < \varepsilon$$

then:

x is an anomaly

Why Multivariate Gaussian Is Better

Basic Gaussian:

- assumes features independent

Multivariate Gaussian:

- models correlations

This helps detect anomalies like:

Low CPU + High Memory

even if each feature individually looks normal.
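The difference between the two models shows up clearly on a small numeric example. The sketch below assumes standardized features (x1 = CPU load, x2 = memory usage) and a hypothetical covariance of 0.9 between them. The independent model is obtained by zeroing the off-diagonal entries.

```python
import numpy as np

def gaussian_density(x, mu, sigma):
    """Multivariate Gaussian density at a single point x."""
    x, mu, sigma = (np.asarray(a, dtype=float) for a in (x, mu, sigma))
    diff = x - mu
    quad = diff @ np.linalg.solve(sigma, diff)
    norm = np.sqrt((2.0 * np.pi) ** len(mu) * np.linalg.det(sigma))
    return float(np.exp(-0.5 * quad) / norm)

mu = np.array([0.0, 0.0])
sigma_full = np.array([[1.0, 0.9], [0.9, 1.0]])  # hypothetical correlated model
sigma_diag = np.diag(np.diag(sigma_full))        # independence: off-diagonals zeroed

typical = np.array([1.5, 1.5])   # high CPU, high memory
odd = np.array([-1.5, 1.5])      # low CPU, high memory

p_basic_typical = gaussian_density(typical, mu, sigma_diag)
p_basic_odd = gaussian_density(odd, mu, sigma_diag)
p_multi_typical = gaussian_density(typical, mu, sigma_full)
p_multi_odd = gaussian_density(odd, mu, sigma_full)

print(f"basic:        typical={p_basic_typical:.3e}  odd={p_basic_odd:.3e}")
print(f"multivariate: typical={p_multi_typical:.3e}  odd={p_multi_odd:.3e}")
```

Under the independent model both points receive the same density, so no threshold can separate them. Under the full covariance model the low CPU + high memory point receives a density many orders of magnitude smaller than the typical point.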

Comparison

| Method | Assumes Independence? | Captures Correlation? |
|---|---|---|
| Basic Gaussian | Yes | No |
| Multivariate Gaussian | No | Yes |

Visual Comparison

Basic Gaussian

Axis-aligned contours (circular when the variances are equal)

Assumes each feature spreads independently of the others.

Multivariate Gaussian

Tilted elliptical contours

Captures relationships between variables.

Mean Shifts Distribution

Changing:

$$\mu$$

moves the center.

Example:

$$\mu = \begin{bmatrix} 1.5 \\ -0.5 \end{bmatrix}$$

shifts peak to:

x1 = 1.5

x2 = -0.5

Complete Anomaly Detection Pipeline

flowchart TD
    A[Collect Normal Data] --> B[Estimate μ and Σ]
    B --> C["Compute p(x)"]
    C --> D{"p(x) < ε ?"}
    D -->|Yes| E[Anomaly]
    D -->|No| F[Normal Example]
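The pipeline can be sketched end to end as follows. Several pieces are assumptions made for illustration: the training data is synthetic, μ and Σ are estimated with the maximum-likelihood formulas (sample mean and 1/m covariance), and ε is set so that 1% of the training points fall below it. In practice ε is usually tuned on a labeled validation set. Log densities are compared instead of raw densities, which is equivalent to testing p(x) < ε and avoids numerical underflow.

```python
import numpy as np

def fit_gaussian(X):
    """Estimate the mean vector and covariance matrix from rows of X."""
    mu = X.mean(axis=0)
    centered = X - mu
    sigma = centered.T @ centered / X.shape[0]  # maximum-likelihood estimate (1/m)
    return mu, sigma

def log_density(X, mu, sigma):
    """log p(x; mu, Sigma) for each row of X."""
    diff = X - mu
    quad = np.sum(diff * np.linalg.solve(sigma, diff.T).T, axis=1)
    _, logdet = np.linalg.slogdet(sigma)
    return -0.5 * (X.shape[1] * np.log(2.0 * np.pi) + logdet + quad)

# Step 1: collect normal data (synthetic, hypothetical correlated features).
rng = np.random.default_rng(42)
X_train = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.9], [0.9, 1.0]], size=2000)

# Step 2: estimate mu and Sigma.
mu, sigma = fit_gaussian(X_train)

# Step 3: choose log(epsilon); here the 1% quantile of training log densities.
log_eps = np.quantile(log_density(X_train, mu, sigma), 0.01)

# Step 4: flag new points whose density falls below the threshold.
X_new = np.array([[0.5, 0.6],     # follows the usual trend
                  [-1.5, 1.5]])   # low x1 with high x2
is_anomaly = log_density(X_new, mu, sigma) < log_eps
```

With this setup the first new point is kept as normal and the second is flagged, because it breaks the learned relationship between the two features.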

Advantages

Captures Correlations

Very important for real-world systems.

More Expressive

Can model:

- tilted distributions

- elongated regions

- correlated variables

Disadvantages

Needs more data.

Why?

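Because the covariance matrix has many parameters:

$$\frac{n(n+1)}{2} \text{ distinct entries for } n \text{ features}$$

For large $n$:

- expensive to compute, since $\Sigma$ must be inverted

- harder to estimate reliably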
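A short sketch makes the data requirement concrete. It counts the distinct covariance entries for a given number of features and then estimates Σ from fewer examples than features, using random data chosen purely for illustration. The helper name `covariance_parameter_count` is an assumption.

```python
import numpy as np

def covariance_parameter_count(n):
    """Distinct entries in a symmetric n x n covariance matrix."""
    return n * (n + 1) // 2

for n in (2, 10, 100, 1000):
    print(f"n = {n:>4}: {covariance_parameter_count(n):>7} covariance parameters")

# With fewer examples than features the estimated covariance is singular.
rng = np.random.default_rng(1)
n_features, n_examples = 10, 5
X = rng.normal(size=(n_examples, n_features))

sigma = np.cov(X, rowvar=False, bias=True)   # 10 x 10 estimate from 5 rows
rank = np.linalg.matrix_rank(sigma)          # at most n_examples - 1

print(f"rank of estimated covariance: {rank} (full rank would be {n_features})")
```

A rank-deficient Σ has determinant zero and no inverse, so the density cannot be evaluated. This is one reason the multivariate model needs comfortably more training examples than features.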

Practical Rule

Use Basic Gaussian When

- features mostly independent

- small dataset

- high dimensionality

Use Multivariate Gaussian When

- features strongly correlated

- enough training data available